I’ve been using my AI goggles for almost a year now. In the beginning, I plodded along with my same boring workouts, except that, for the first time, I could actually see how boring the sets were in the recorded results. I also noticed that the set lengths were too long, resulting in a gradual time decrease.

Eventually, I succumbed to the pressure of the head coach subscription. This definitely improved my experience with the goggles, even though I felt a little turned off that so many cool things were inaccessible without the subscription. For example, I couldn’t upload or save workouts to the goggles. I also missed out on tips and insights related to my workouts and some customization.

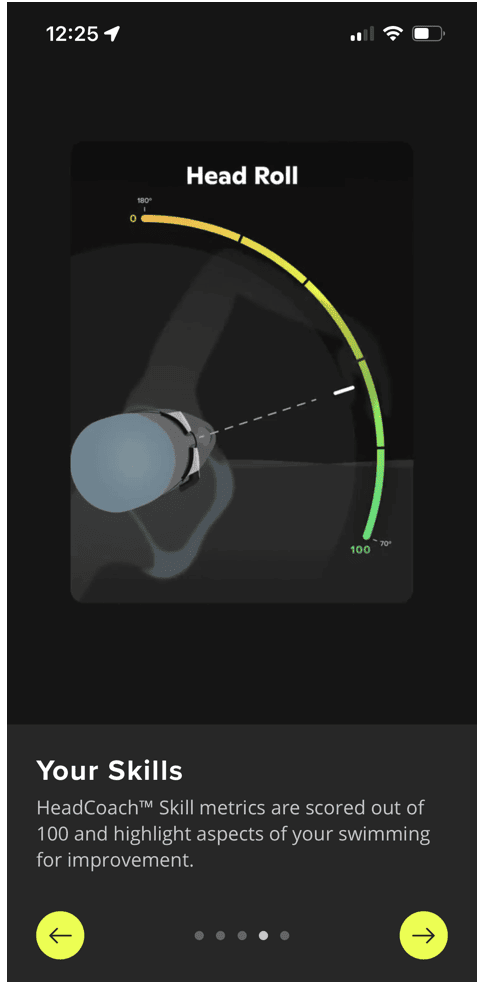

Since subscribing, I’ve noticed my scores increasing. In the first couple of months, they climbed several points. Lately, they’ve hit a plateau. The goggles offer some explanations for this, claiming that as the workouts get harder, it takes time for my skills, technique, and endurance to catch up. However, much to my surprise, the goggles missed what is probably one of the most important and obvious insights: the time at which I go swimming impacts my score, sometimes by almost 10 points!

I felt surprised when I came to this realization. Despite all the fancy graphs, dashboards, timings, and measurements the goggles provide, the biggest difference in my swim performance comes down to the time of day. This is not something the goggles track, measure, or even observe. I noticed it by scrolling through the latest dashboards.

Fortunately, I mostly swim at the same times every week. I usually do one evening swim on Wednesdays. This is a tough day because it’s one of my in-office days. At the end of the day, I drive home in rush-hour traffic, which takes around 45 minutes. I do a quick turnaround at home to grab my swim gear and instrument before heading to the gym, followed by a 2.5-hour rehearsal. Needless to say, my scores are usually lower for this swim, or for any weekday evening swim in general.

The second swim is earlier in the day on weekends. The gym closes at 8 these days, so I accidentally discovered that earlier times work better for my energy and performance levels. While the technology helped to provide the data, it was simple human observation that made the connection. We’re not ready to be replaced by machines yet.